Hello again everyone!

Last month, I discussed some really interesting topics at the intersection of psychiatry and pathology, two fields that aren’t exactly the closest and that seem to have “diverged” in the hospital milieu as if in a poem by Robert Frost. This month I’d like to bring the conversation back to a topic I’ve addressed before: improving multidisciplinary medicine and creating a Just Culture in medicine.

Not exactly culture with a swab or agar dish, a Just Culture is an all-encompassing term for system-based thinking and process improvement that does not come at the expense of individuals. In a post I made last July, I covered high reliability organizations (HROs), a topic that addresses communication and accountability in high-stakes environments like healthcare!

Just Culture isn’t a stranger to lab medicine. The American Society for Clinical Laboratory Science (ASCLS) published a position paper in 2015 utilizing this trending healthcare buzzword. On the subject of patient safety, ASCLS believes “Medical Laboratory Professionals must adopt a ‘fair and just culture’ philosophy, recognizing that humans make errors, and understanding the science of safety and error prevention.” (Source: ASCLS 2015, https://www.ascls.org/position-papers/185-patient-safety-clinical-laboratory-science) We all know how we maintain patient safety in the lab, right? We do that through quality control, QA measures, competencies (both internal and from accrediting bodies like CAP), and continuing education. Raise your hand if your lab is getting inspected, just finished getting inspected, will be inspected soon, or if you’ve recently done competency/proficiency testing yourself, CE courses for credentialing, or are reading this blog right now! We’re all “continuing” our education in health care ad infinitum because that’s how it works: we keep learning, adjusting, and ensuring best practices consistent with the latest knowledge. And, instead of punishing lab professionals when we make errors, we try to be transparent so that each error is a learning opportunity moving forward.

I’m currently in my OB/GYN rotation at Bronx-Care and during the most recent Grand Rounds we had someone talk about “Just Culture,” a sort of continuation of the themes of the same lecture series that inspired my article on HROs. Essentially, the theme is that disciplining employees for violating rules or causing error(s) in their work is less effective than counseling, education, and system-oriented, best-practice-informed care. In this talk, we watched a short video (embedded below) which walked us through approaching faults or errors in medicine in a way that empowers and educates. A story from MedStar Health, a Maryland-based health system, demonstrates how systems-based thinking can be the best way to solve problems in healthcare.

Annie, a nurse in the MedStar Health system, is the spotlight story in this video. She came across an error message on a glucometer after checking someone who was acutely symptomatic. She double-checked it and, with her providing team, made the clinical decision to give insulin. This sent the patient into a hypoglycemic event which required ICU support. In the story, she was actually suspended and reprimanded for her “neglect,” but other nurses made the same error just days later. This prompted some action, inciting nursing managers and other administrators to investigate further and ultimately bringing in the biomedical engineers from the company to weigh in on this systemic fault in glucose POCT. Annie returned to work, and the problem was recognized not as user error but as system error; she went on to talk about how she felt unsure of her clinical competency after being reprimanded. Imagine if you accidentally reported the presence of blast cells in a manual differential in a pediatric CBC while you were alone on a night shift only to find out from the manager on days that you made a pretty big mistake with clinical implications. Then imagine you were suspended for a few weeks instead of simply asked to explain and identify opportunities to increase your knowledge. Pretty harsh, right? I’m glad the MLS who did that didn’t lose his job and only had to do a few more competency trainings…yep.

Fine. It was me. I mentioned mistakes in my discussion on HROs and discussed that particular mistake in part of a podcast series called EA Shorts with a clinical colleague of mine. Everyone makes mistakes, especially in training, and that’s okay! It’s how we deal with them that matters.

Anyone else notice a stark absence of professional laboratory input in the video? I assume many of you sharp-sighted lab automation veterans didn’t miss the glaring “LO” behind the dialogue box on the glucometer. And, to me, that raises the question: was there any lab input on this instrument, its training, or its users? Nurse Annie made a mistake, but she’s not alone. According to a Joint Commission study from November last year, close to 11% of users make mistakes when prompted with error messages, compared to 0% of users misinterpreting normal values on screens of a particular model of glucometer. And that’s just one type of instrument. Imagine 1 in 10 nurses, medical assistants, or patients misinterpreting their glucose readings. (Source: The Joint Commission Journal on Quality and Patient Safety 2018; 44:683–694 Reducing Treatment Errors Through Point-of-Care Glucometer Configuration) This should also be a good opportunity to remind us all of CLIA subpart M, the law that outlines who can accredit, use, and report point-of-care results. Herein lies another problem, stated well by the American Association for Clinical Chemistry (AACC) in 2016, “… another criteria for defining POCT—and possibly the most satisfactory definition from a regulatory perspective—is who performs the test. If laboratory personnel perform a test, then this test typically falls under the laboratory license, certificate, and accreditation, even if it is performed outside of the physical laboratory space, and regardless of whether the test is waived or nonwaived. On the other hand, waived or nonwaived laboratory tests performed by non-laboratory personnel are nearly always subject to a different set of regulatory and accreditation standards, and these can neatly be grouped under the POCT umbrella,” and that can mean trouble when we’re all trying to be on the same clinical page.

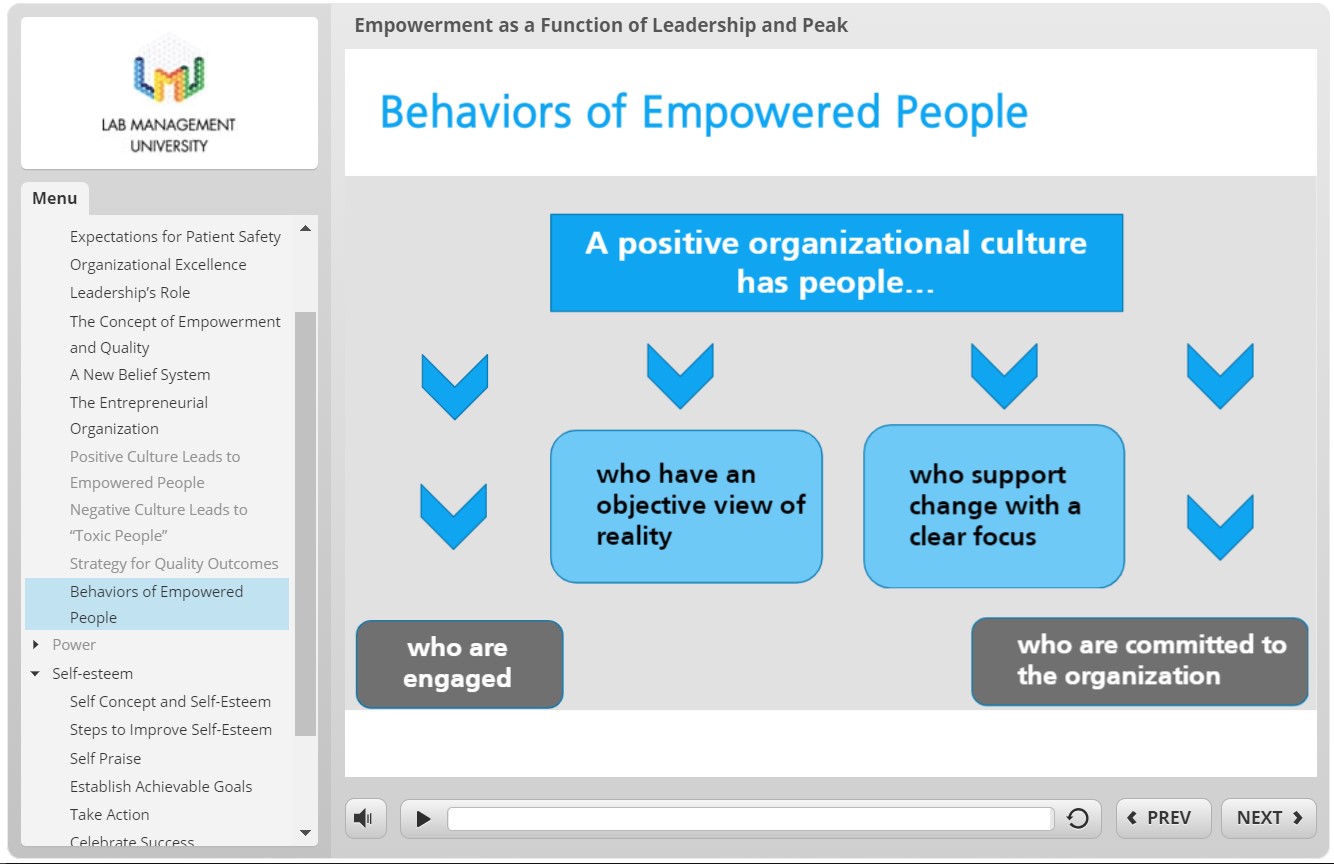

In previous posts, I’ve mentioned the excellent knowledge contained within the Lab Management University (LMU) program. One of the modules I went through discussed this topic exactly: Empowerment as a Function of Leadership and Peak Performance. In short, if we want to be good leaders in the lab, we have to set expectations for positive patient outcomes, including safety. Good leaders should empower their staff with education, support, and resources. Poor management can create toxic environments in which staff are prone to mistakes. If we can be dynamic leaders, who adapt to ever-improving best practices and respond with understanding and compassion to mistakes, then our colleagues become just as reliable as your favorite analyzer during that CAP inspection I mentioned.

I often get clinician input about how the processes between the bedside and the lab can be improved. Often, they include comments about the need to share relevant clinical data for improving diagnostic reporting or improving a process between specimen collection and processing. But what often gets left out is the human element: the scientist behind the microscope, the manager behind the protocol, and the pathologist behind the official sign out report. Let’s continue to incorporate all of the feedback our colleagues provide while maintaining a safe and empowered culture for ourselves, our staff, and our patients.

What do you think? How does your lab, hospital, clinic, etc. address POCT safety or patient safety at large? Do you operate within a Just Culture? Share and comment!

Thanks and see you next time!

–Constantine E. Kanakis MSc, MLS (ASCP)CM graduated from Loyola University Chicago with a BS in Molecular Biology and Bioethics and then Rush University with an MS in Medical Laboratory Science. He is currently a medical student actively involved in public health and laboratory medicine, conducting clinicals at Bronx-Care Hospital Center in New York City.